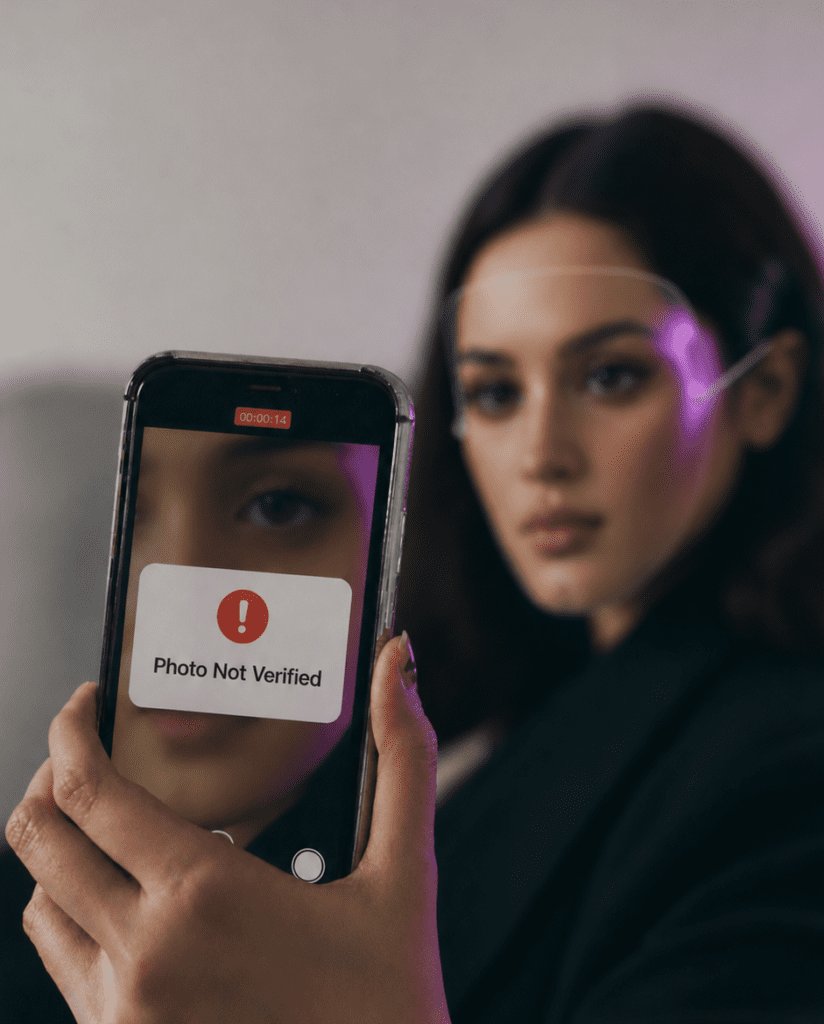

Deepfakes are no longer just fodder for sci-fi thrillers. Today they threaten almost every sector. Take the 2024 deepfake case in Hong Kong, where fraudsters used a convincing synthetic video of a CFO to trick an employee into transferring about USD 25 million to accounts they controlled, showing clearly how AI-generated media can devastate corporate security.

The threat is not limited to the corporate sector: many experts now warn that deepfakes are being used for financial fraud, political misinformation, and identity scams around the globe. In India, for example, manipulated videos of public figures have circulated widely, leading law enforcement to invoke forgery and cybercrime provisions under the Bharatiya Nyaya Sanhita, 2023 and the Information Technology Act, 2000.

Not Just a Social Media Problem Anymore

The rise of generative AI has made deepfakes fast, cheap, and alarmingly accessible. Once the domain of specialist labs, manipulated videos, voices, and images can now be created with just a smartphone. Cybersecurity experts note that deepfake attacks have surged alongside general AI advancement and remain, along with phishing and malware, one of the most significant AI-powered threats.

Across sectors, the harms of deepfakes are real and immediate.

- In finance, fake executive calls and AI-generated voices are used to bypass authentication.

- In elections, synthetic media can be deployed to manipulate public opinion.

- In healthcare, doctored videos or audio could misrepresent medical advice or fraudulently influence patients.

- Even brand and personal reputations are at risk when deepfake endorsements go viral.

The result? Organisations and governments alike are clamouring for tools that can reliably distinguish what is real from what is synthetic.

Patent Gold Rush: What Big Tech Is Filing

The response from Big Tech goes beyond whitepapers and open-source models. Companies such as Google and security firms like McAfee are actively filing patents on deepfake detection methods. This signals that deepfake detection is not just a research problem but a commercial and infrastructural issue.

For example:

- Google has at least one published application for methods and apparatus to detect deepfake content that uses computer vision techniques to analyse multimedia patterns and determine authenticity.

- McAfee’s patents propose multi-model ensemble approaches to improve confidence in detection systems.

- Other entities, including Trust Stamp, have pursued patents for systems that detect injection attacks where deepfakes are inserted into biometric streams.

This is a growing trend across sectors because patents protect technical infrastructure, not just lines of code. That means detection pipelines, watermarking schemes, provenance tracking, and AI-based authenticity scoring systems are becoming IP assets.

What Exactly Is Being Patented

To non-technical founders, patents can seem impenetrable. But the core ideas being protected are straightforward:

- Signal-based detection: Extracting patterns in pixels, audio, or metadata that distinguish synthetically generated content from genuine content.

- Model fingerprinting and watermarking: Embedding a signature that lets verifiers trace the origin of media.

- Blockchain-based provenance systems: Immutable logs that show the history of a digital file’s creation and modifications.

- Multi-layer verification engines: Systems combining several AI models to reach higher reliability.

In each case, the patentable contribution is a software system linked to a technical workflow that produces reliable detection, not a mere abstract idea.
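To make the provenance idea more concrete, here is a minimal Python sketch of a tamper-evident log in which each entry stores the hash of the entry before it. It is an illustration only: it is a single-machine hash chain rather than a distributed blockchain, it does not represent the method claimed in any patent mentioned here, and the names `ProvenanceLog`, `file_digest`, and the event fields are hypothetical.

```python
import hashlib
import json


def file_digest(data: bytes) -> str:
    """Return the SHA-256 digest of a media file's bytes."""
    return hashlib.sha256(data).hexdigest()


def _entry_hash(prev_hash: str, event: dict) -> str:
    """Hash an event together with the previous entry's hash."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class ProvenanceLog:
    """Hypothetical append-only log; each entry is chained to the one before."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries = []

    def append(self, event: dict) -> str:
        """Add an event and return the hash that seals it into the chain."""
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        digest = _entry_hash(prev, event)
        self.entries.append({"prev": prev, "event": event, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev:
                return False
            if entry["hash"] != _entry_hash(prev, entry["event"]):
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = ProvenanceLog()
    log.append({"action": "created", "sha256": file_digest(b"original frames")})
    log.append({"action": "cropped", "sha256": file_digest(b"cropped frames")})
    print(log.verify())  # True: the chain is intact

    log.entries[0]["event"]["action"] = "forged"  # simulate tampering
    print(log.verify())  # False: the stored hash no longer matches
```

A real provenance system would also need signed entries and independent copies of the log, since anyone who controls the only copy can rebuild the whole chain after editing it.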

What Makes These Patents Strategically Valuable?

- First, defensive protection is key. By holding patents on detection systems, companies can reduce their exposure to infringement claims and strengthen their freedom to operate in markets where real-time verification tools become mandated.

- Second, these patents can be licensed to regulators, platforms, and enterprises, turning deepfake verification into a service layer that others depend on. Governments introducing digital authenticity requirements could make such patents essential infrastructure.

- Third, patents can help establish standards. Whoever holds key patents may influence which detection methods become normative, shaping ecosystem design and compliance frameworks.

- Lastly, as AI governance and transparency laws loom larger, having patent-protected tools ready positions companies to respond to future regulatory requirements with proprietary, scalable solutions.

Where India Fits In

India’s digital milieu makes it a high-risk, high-impact market for deepfake abuse. With over 800 million internet users and rapid AI adoption across fintech, media, and enterprise platforms, generative content misuse is already on the radar of cybersecurity specialists.

Additionally, Indian firms are not staying on the sidelines. Patent records show deepfake detection systems filed by Indian institutions and companies, including AI-enhanced classification methods and apparatus for mitigating deepfake attacks.

Patent Law Angle: Are Deepfake Detection Tools Patentable in India?

One common misunderstanding is that software is inherently unpatentable in India due to Section 3(k) of the Patents Act, 1970. In reality, deepfake detection tools can be patentable if framed as technical systems delivering a technical contribution.

This aligns with judicial guidance such as Ferid Allani v. Union of India, which clarified that software with a technical effect can satisfy patentability criteria, provided the claimed invention is not an abstract algorithm but a system or process that produces a demonstrable technical improvement.

Applied to deepfake detection, this means:

- Claims should highlight system architecture, data flow, and interaction with hardware or networks.

- Simply stating “an AI model for detection” is not enough; the how and the effect must be articulated in technical terms.

Thus, a thoughtful claim strategy can place these tools squarely within patentable subject matter.

What This Means for Indian Startups

For Indian startups building AI safety, cybersecurity, or media verification platforms, here are a few practical takeaways:

- File early: The deepfake detection space is moving fast, and an early filing secures an early priority date. Proactive patent filings help create defensible IP, attract enterprise and government clients who value trust infrastructure, and position Indian solutions in global markets.

- Structure system claims: System-level patent claims typically fare better than claims framed solely around abstract algorithms.

- Use PCT for scalability: Patent Cooperation Treaty (PCT) filings let Indian startups keep options open for US, Europe, and other markets.

- Don’t rely only on trade secrets: Unlike trade secrets, patents provide enforceable exclusive rights that serve well in enterprise sales and partnerships.

Policy Meets Patents: The Road Ahead

As governments and the general public pay more attention to AI governance, patent portfolios in verification tech will quietly shape compliance tools. Digital identity laws, platform liability rules, and national security provisions may reference patented detection standards. In other words: while laws take time to evolve, patents will define the tools that enforce the rules.

Conclusion

Patents for deepfake detection are not just defensive tech, but part of a broader trust infrastructure economy. Deepfakes threaten what we trust, and there is now a need for patents that protect that trust.

Author: Kanchan Raichandani (Intern)
